Advertising Software Development Kit (SDK): serving up more than just in-app ads and logging sensitive data.

Dave Bittner: Hello everyone, and welcome to the CyberWire's Research Saturday. I'm Dave Bittner, and this is our weekly conversation with researchers and analysts tracking down threats and vulnerabilities, solving some of the hard problems of protecting ourselves in a rapidly evolving cyberspace. Thanks for joining us.

Alyssa Miller: So, a partner actually reached out to us and asked for our help researching some weird behaviors they were seeing related to this particular SDK.

Dave Bittner: That's Alyssa Miller. She's an Application Security Advocate at SNYK. The research we're discussing today is titled, "SourMint: iOS remote code execution, Android findings, and community response."

Alyssa Miller: We do have a pretty strong research team here at SNYK that is normally focused on open-source research, identifying new vulnerabilities and so forth. So this was a little outside our wheelhouse, but it still drew on a lot of what we do on a day-to-day basis.

Alyssa Miller: So, we did dig into the SDK, and at the time our initial focus was on the iOS version. As we got into it, we started finding all the fingerprints you would expect to see when someone has some form of malicious content in their code: things that were obfuscated in weird ways, and when you dig into those further, suddenly you start to see command-and-control functions and things like that. It all sort of unfolded from there.

Dave Bittner: Yeah. Well, let's start from the beginning here. This is an SDK, and the iOS version is what you initially looked into. Give us a little of the lay of the land. What is it intended to do? Who are the providers, and what do they say it does?

Alyssa Miller: Sure. The Mintegral SDK is provided by a company called Mintegral, and really, it's an app monetization platform. In other words, developers can sign up for this SDK and add it to their application, and it will serve in-app ads. As users click on those ads and take different actions as a result, those clicks get attributed to the app owner and the Mintegral SDK, and the advertisers pay their fees based on that click traffic. That, ultimately, is how a developer gets paid for the ads in their application.

Alyssa Miller: Now, a developer might include the SDK directly, so they may just sign up for Mintegral, download the SDK, and add it to their application. Or, sometimes, developers will work with ad mediation platforms, I think is the term for it. Those bring in multiple SDKs, so different ad networks: it might include Mintegral and a few other options, all in one package, so devs can pull a bunch of different advertising networks into their app much more easily and then deploy that. And we did indeed see that Mintegral was involved with numerous ad networks.

Dave Bittner: So, that's what it is supposed to do. And I suppose on the surface level, that's what it appears to do. But what did your research uncover here?

Alyssa Miller: So, initially we found two things: one that was clearly malicious, and one that was suspiciously malicious, right? For the first one, we worked with some people in the ad attribution space. Think about an app where you have multiple SDKs installed and someone clicks on one of those ads. On the backend, someone has to attribute that click to the ad network and the application it came from.

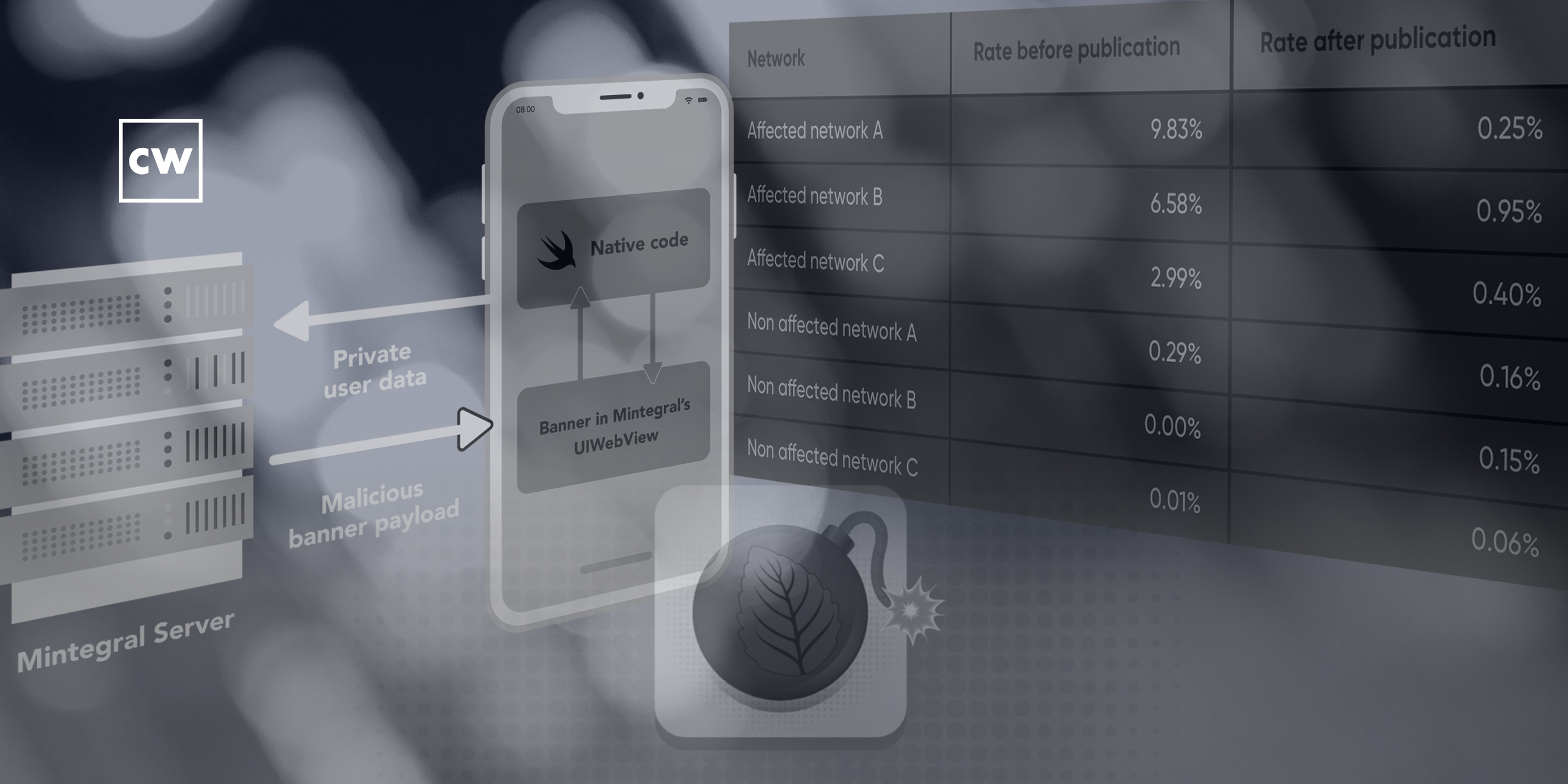

Alyssa Miller: What we saw in the SDK was that it was capturing those clicks regardless of whether the ad had been served by the Mintegral SDK or by a different SDK. They were then using that information on the backend to send their own attribution claim for that click. In other words, a user might click on an ad from some other third party's ad network, but Mintegral would send the ad attribution provider a claim saying that the click came from them.

Alyssa Miller: With the way most of these attribution providers work, when there are conflicting claims, the last click gets the attribution. Working with providers in the space, we saw that this was indeed what was happening: the number of conflicting attribution claims was far higher than you would ever expect to see, well above the nominal level, and in every instance the attribution was going to Mintegral. So we were able to confirm that, yes, they were definitely hijacking that attribution, which basically means taking money from other SDK owners by claiming the attribution, and the revenue, for themselves.
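To make the last-click rule concrete, here is a toy model in Python. It assumes a provider that simply credits the latest claim for each install; the `ClickClaim` type, the network names, and the timestamps are hypothetical, and real attribution providers apply additional rules not covered here.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ClickClaim:
    network: str      # ad network claiming credit (hypothetical names)
    install_id: str   # the conversion being attributed
    timestamp: float  # when the network says the click happened, in seconds


def attribute_last_click(claims):
    """Credit each install to whichever network reported the latest click."""
    winners = {}
    for claim in sorted(claims, key=lambda c: c.timestamp):
        winners[claim.install_id] = claim.network  # later claims overwrite earlier ones
    return winners


def conflict_winners(claims):
    """Count, per network, how many conflicting installs it ended up winning."""
    by_install = {}
    for claim in claims:
        by_install.setdefault(claim.install_id, []).append(claim)
    wins = Counter()
    for install_claims in by_install.values():
        if len(install_claims) > 1:  # more than one network claimed this install
            wins[max(install_claims, key=lambda c: c.timestamp).network] += 1
    return wins


claims = [
    ClickClaim("NetworkA", "install-1", 100.0),      # the ad the user actually tapped
    ClickClaim("HijackingSDK", "install-1", 100.5),  # a claim sent after observing that tap
    ClickClaim("NetworkB", "install-2", 200.0),      # an uncontested install
]
print(attribute_last_click(claims))  # {'install-1': 'HijackingSDK', 'install-2': 'NetworkB'}
print(conflict_winners(claims))      # Counter({'HijackingSDK': 1})
```

In this model, a network that can observe every tap and always reports just after the legitimate one wins every conflict, which is the skewed pattern of conflicting claims described in the interview.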

Alyssa Miller: But while looking into that, we found the other thing: the functionality they used didn't just capture ad clicks, it captured every click on any URL anywhere within that application. So you could have somebody clicking a link to open another website, or to open, say, a Google Doc, and Mintegral captures all of that and ships it off to their server. And think about what they were capturing: not only the URL but also the HTTP headers, which often contain auth tokens and similar credentials, all of it now being collected on a third-party Mintegral server. Obviously, we can't tell what Mintegral was doing with that information on the backend, right?
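As a rough illustration of why header capture matters, the sketch below flags which headers in a captured request carry credentials. The record layout, the URL, and the header list are assumptions for illustration only; the interview does not describe the format Mintegral actually sent.

```python
# Headers that commonly carry credentials. "X-Api-Key" is a common convention,
# not a standard header, and this list is illustrative rather than exhaustive.
SENSITIVE_HEADERS = {"authorization", "cookie", "proxy-authorization", "x-api-key"}


def credential_headers(headers):
    """Return the names of captured headers that likely contain secrets."""
    return sorted(name for name in headers if name.lower() in SENSITIVE_HEADERS)


# A hypothetical record of the kind a URL-capturing hook could ship off-device.
captured = {
    "url": "https://docs.example.com/document/d/abc123",
    "headers": {
        "User-Agent": "ExampleApp/1.0",
        "Authorization": "Bearer <token>",
        "Cookie": "session=<session-id>",
    },
}
print(credential_headers(captured["headers"]))  # ['Authorization', 'Cookie']
```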

Dave Bittner: Right.

Alyssa Miller: I mean, they may only be using it for the ad attribution fraud that we were able to confirm, or they could be leveraging all that data in any number of ways, considering they're now getting user traffic patterns and possibly auth tokens and other things. There's no telling what could be done with that data, or whether it could end up exposed to some third-party hacker somewhere else.

Dave Bittner: Now, behind the scenes, when you all dug in, you learned that Mintegral was sort of covering their tracks? There were some things in the SDK to hide what they were doing.

Alyssa Miller: Yeah. So, a couple of things we noticed right away. A key one was that we found a number of pieces of functionality to detect whether the device was jailbroken and whether the app was running under a debugger, and we could see the app's behavior shift depending on whether either of those conditions was present. That's a key sign right there, right? Why is an ad SDK so sensitive about jailbroken devices and debuggers? You expect to see that in a banking app. You don't expect to see that in an ad SDK.

Dave Bittner: Mm-hmm.

Alyssa Miller: So, we started digging into that, and it led us to a number of functions within the application. This is also where we found the command-and-control side of this: each time the SDK is initialized, it goes to a Mintegral server and downloads a configuration, and that configuration turns certain parts of the functionality on and off, including the jailbreak detection, the debugger detection, and so forth.
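A minimal sketch of the pattern described here, a remotely downloaded configuration that switches behavior on and off at start-up, might look like the following. The flag names are hypothetical (the real configuration format is not given in the interview), and the choice to suppress capture on jailbroken or debugged devices is an assumption about how such checks could be combined.

```python
import json

# Hypothetical feature flags; not Mintegral's actual configuration keys.
DEFAULTS = {"jailbreak_check": False, "debugger_check": False, "url_capture": False}


def load_flags(raw_config):
    """Overlay a downloaded JSON config on local defaults, ignoring unknown keys."""
    remote = json.loads(raw_config)
    return {key: bool(remote.get(key, default)) for key, default in DEFAULTS.items()}


def should_run_capture(raw_config, is_jailbroken, under_debugger):
    """Decide at SDK start-up whether the capture feature runs on this device."""
    flags = load_flags(raw_config)
    if flags["jailbreak_check"] and is_jailbroken:
        return False  # assumption: go quiet on devices an analyst is likely to use
    if flags["debugger_check"] and under_debugger:
        return False
    return flags["url_capture"]


config = '{"jailbreak_check": true, "debugger_check": true, "url_capture": true}'
print(should_run_capture(config, is_jailbroken=False, under_debugger=False))  # True
print(should_run_capture(config, is_jailbroken=True, under_debugger=False))   # False
```

In this sketch, because the switches live on the server, the same shipped binary can behave differently from one launch to the next without any code update.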

Alyssa Miller: Then we started to dig into the functions we had found, and they were obfuscated with what appeared to be Base64 encoding. It actually turned out they had written their own proprietary encoding, similar to Base64 but their own thing, and we were able to break through that. Obviously, Base64 is not encryption, and theirs wasn't either. It was just an encoding that obfuscated the code, and in some places they applied it twice.
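One common way to build a "Base64-like" scheme is to keep the Base64 algorithm and swap in a shuffled alphabet. The sketch below assumes exactly that, with a hypothetical alphabet (the standard one rotated by 13 positions); the interview does not say how Mintegral's encoding actually differed from Base64.

```python
import base64

STANDARD = "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/"
# Hypothetical custom alphabet: the standard one rotated by 13 positions.
CUSTOM = STANDARD[13:] + STANDARD[:13]

TO_CUSTOM = str.maketrans(STANDARD, CUSTOM)
TO_STANDARD = str.maketrans(CUSTOM, STANDARD)


def custom_encode(data: bytes) -> str:
    """Standard Base64, then swap each character into the custom alphabet."""
    return base64.b64encode(data).decode("ascii").translate(TO_CUSTOM)


def custom_decode(text: str) -> bytes:
    """Map back to the standard alphabet, then decode; '=' padding is untouched."""
    return base64.b64decode(text.translate(TO_STANDARD))


secret = b"collectUrl"  # hypothetical obfuscated string
once = custom_encode(secret)
twice = custom_encode(once.encode("ascii"))  # the "applied twice" case
print(custom_decode(custom_decode(twice).decode("ascii")))  # b'collectUrl'
```

No key is involved: once the 64-character mapping is recovered, decoding is a table lookup followed by ordinary Base64, which is why a scheme like this obfuscates rather than encrypts.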

Alyssa Miller: When we dug into that, we started to find functions that were reporting data back, using a couple of techniques you really wouldn't typically see. One was method swizzling, which they used to capture these URL clicks, and that's a sign right there. Method swizzling lets you replace the implementations of the underlying iOS SDK methods at runtime. That's how they were able to access not only clicks within their own SDK, but clicks that originated elsewhere: they were basically hijacking how iOS processes URL clicks in general within the context of that app.
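Method swizzling itself is an Objective-C runtime technique (for example, exchanging two methods' implementations with `method_exchangeImplementations`). The sketch below is a Python analogue using runtime monkey-patching, not iOS code: it keeps a reference to a class's original method, installs a wrapper that records every call, and then forwards to the original so the app behaves normally. `WebView`, `open_url`, and the captured record are hypothetical stand-ins.

```python
captured = []  # stand-in for data a hooking SDK would send to its own server


class WebView:
    """Hypothetical platform class that the whole app uses to open URLs."""

    def open_url(self, url, headers):
        return f"opened {url}"


def install_hook(cls):
    original = cls.open_url  # keep the original implementation

    def hooked(self, url, headers):
        captured.append({"url": url, "headers": dict(headers)})  # observe every call
        return original(self, url, headers)  # then behave exactly as before

    cls.open_url = hooked  # affects every instance, including ones created elsewhere


install_hook(WebView)
view = WebView()  # e.g. created by unrelated app code, not by the ad SDK
print(view.open_url("https://example.com/page", {"Authorization": "Bearer <token>"}))
print(len(captured))  # 1
```

Because the wrapper forwards to the original, the user sees no change in behavior, and nothing in the `WebView` class itself reveals the hook until `install_hook` runs.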

Dave Bittner: Can you give me some insights – I mean, how does something like this make it through app review from Apple?

Alyssa Miller: It's a couple of the things I just mentioned, right? The obfuscation is definitely a big one, because it makes it harder to tell exactly what is going on without getting pretty deep into disassembly of the code and tracing through symbols and so forth. There's a lot of work that has to happen just to track all that back. Then there's the fact that they're using method swizzling, which happens at runtime. So static code analysis alone doesn't really tell you, oh, they're going in and putting hooks into these standard iOS functions, because the hooking happens at runtime and the code that accomplishes it has been obfuscated. So it's a difficult thing to detect. When you think about what Apple is doing with millions of apps every day that they're trying to verify for App Store inclusion or updates, it's not totally surprising that this was a method they were able to leverage to get around that type of verification.

Dave Bittner: Now, one of the fascinating elements here is that the plot thickens. You all did responsible disclosure to Apple, and the folks at Mintegral opened up, well, not open-sourced, but opened up the code for folks to take a look at, and the story continues from there.

Alyssa Miller: Yeah, it's really interesting, right? First of all, Mintegral of course denied everything, and Apple, for their part, kind of downplayed it too. Because we couldn't demonstrate that end users were being impacted, Apple wasn't really interested, and they said as much in the media: they couldn't confirm that user privacy was being impacted, because we couldn't say what happened to this data once it went to Mintegral's servers.

Alyssa Miller: So, from that perspective, Mintegral says, hey, we're not doing anything, this is all false. But then they made significant changes to their privacy policy, made significant changes to their code base, released a new version, and then, like you said, announced they were open-sourcing their code. Well, not quite, because you still have to be a Mintegral subscriber to get their SDK, but it's a little more open than it was before.

Alyssa Miller: What we were ultimately able to do, though, was compare the functionality that existed before and after that update, and that led us to additional findings of potentially malicious activity that could occur through that SDK. One of the big ones was a potential for remote code execution through the served ads themselves. We found it by analyzing the previous code and uncovering more obfuscated pieces that, because of that obfuscation, we hadn't found in our initial research. So we were able to see how JavaScript could be included in an ad and then used to actually execute code within the application, just by serving a particular ad.

Dave Bittner: And now, am I correct that they went ahead and sort of reverted some of the code back again after a few weeks?

Alyssa Miller: We did see some of that as well. Certain components returned, in particular some of that obfuscation, some of the jailbreak detection, and so forth. Again, those are things that get you looking and get you suspicious, because why would this be in an SDK for ad serving? It just doesn't make a lot of sense. We also found that they were doing URL tracking to an even greater degree. We saw that it had been re-enabled, so again, they were looking at URL clicks across the application, not just within their SDK. So, yeah, we did see that come back as well.

Dave Bittner: What's the overall response been in the community? Now that you've got the word out, your research has been published, notices have been sent out to the folks who've used this SDK – what's the response been?

Alyssa Miller: So I think people stood up and took notice with that second set of findings, because not only did we publish additional iOS findings, but we found the same behaviors happening in Android, and in fact deeper levels of concern there. So, the community response has been pretty significant. A number of providers removed the Mintegral SDK from their own SDKs, and we saw blog posts about it from providers as well. We even saw Apple send a notice to owners of applications that included the Mintegral SDK, letting them know they would need to update or remove it. There definitely was a greater response overall from the community to that second publication.

Dave Bittner: Now, is it fair to say that folks could still have apps on their devices that use this SDK? If I installed a previous version of something a while ago, could it still be buried within the app? I suppose there's really no practical way to know.

Alyssa Miller: Yeah, from a user perspective, it is difficult, because you're really reliant on the app owner having seen this information and either updated the Mintegral SDK or removed it altogether. As a user, you don't really get to see which ad providers an app uses. On iOS, you're probably a little safer because, as I said, Apple reached out to their developers and app owners and made it clear that they needed to either update that SDK or remove it altogether to get rid of this functionality. I don't know how common or how strong the presence of Mintegral still is out there following all of this. Being removed from a number of high-profile ad networks and providers has obviously impacted them significantly, I'm sure. But yes, there is still the potential that, as a user, you could have one of these apps on your phone that still has this capability within it.

Alyssa Miller: For a user, the biggest concern is, again, the privacy exposure. A user probably doesn't care who's getting the ad revenue, so the ad hijacking that was going on isn't as big a concern for them. But not knowing what Mintegral is doing with all the data they're collecting, that's certainly concerning. Unfortunately, there's not a good answer from a user perspective, which is why we work within the dev community to make developers aware of it. We added this as a new vulnerability to our vulnerability database at SNYK, so developers using SNYK software composition analysis are able to identify that this SDK is present in their code. Apple is doing their part. Google is doing their part by sending out the broad notification on this. But, yeah, that ultimately is where it has to come from: the app owners and app developers are the ones who really have to make this change and take care of it.

Dave Bittner: From a developer's point of view, in a case like this, is it really that reputational thing: exchanging information with my colleagues and co-workers, being part of the community, to know when something like this is going on?

Alyssa Miller: Yeah, ultimately, there are only a few ways to really be aware of this. One is just having that awareness, right? Like you said, being involved in the community and knowing that this is something that's occurred and been disclosed. The other, obviously, is using tooling that can identify things like this, which would center mostly around software composition analysis: something that actually looks through your dependencies and identifies known vulnerabilities within them.
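At its core, the software composition analysis check described here matches your resolved dependencies against a database of known-vulnerable packages. The minimal sketch below assumes a simplified `- Name (version)` lockfile format loosely modeled on CocoaPods' `Podfile.lock`; the package names, versions, and advisory data are hypothetical, and real SCA tools typically do much more, such as version-range matching and transitive dependency resolution.

```python
# Hypothetical advisory data: package name -> affected versions.
ADVISORIES = {"ExampleAdSDK": {"6.3.5", "6.3.6"}}


def parse_dependencies(lines):
    """Parse '- Name (version)' entries into a {name: version} dict."""
    deps = {}
    for raw in lines:
        entry = raw.strip().lstrip("-").strip().rstrip(":")
        name, sep, rest = entry.partition(" (")
        if sep and rest.endswith(")"):
            deps[name] = rest[:-1]
    return deps


def find_vulnerable(deps, advisories):
    """List 'name version' for every dependency with a matching advisory."""
    return sorted(
        f"{name} {version}"
        for name, version in deps.items()
        if version in advisories.get(name, set())
    )


lockfile = [
    "  - ExampleAdSDK (6.3.5):",
    "  - SomeAnalytics (2.1.0)",
]
print(find_vulnerable(parse_dependencies(lockfile), ADVISORIES))  # ['ExampleAdSDK 6.3.5']
```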

Alyssa Miller: There aren't a lot of good answers outside of that. It's probably not reasonable to expect a developer to sit down and go through the source code of every SDK they include in their applications. And unfortunately, even things like source code analysis tools probably wouldn't have caught something like this, again, because of the level of obfuscation being used.

Dave Bittner: Yeah. I mean, we use SDKs to save time, right?

Alyssa Miller: Save time and, in this case, really enable the monetization of your app. You've got app maintainers who spend a lot of time and effort creating fun and popular applications, and they want to make some money from that. Users don't like to pay for applications, so what other choice do you have? You serve up ads. And by far the easiest way to get into that is to get involved with an ad network and load their SDK into your app.

Dave Bittner: Yeah.

Alyssa Miller: This is a difficult situation, and it's very challenging to understand a lot of its nuances. It gets you pretty deep into ad networks and so forth. This is why continued research in the open-source space is so important. We see it more and more from commercial companies like SNYK, and we also see it happening more in the academic space. I think those are good trends that we need to continue. We really need to keep up the overall effort to identify malicious activity within the open-source market, and the more the open-source space becomes its own police, the better our security posture will get around things like this.

Dave Bittner: Our thanks to Alyssa Miller from SNYK for joining us. The research is titled, "SourMint: iOS remote code execution, Android findings, and community response." We'll have a link in the show notes.

Dave Bittner: The CyberWire Research Saturday is proudly produced in Maryland out of the startup studios of DataTribe, where they're co-building the next generation of cybersecurity teams and technologies. Our amazing CyberWire team is Elliott Peltzman, Puru Prakash, Stefan Vaziri, Kelsea Bond, Tim Nodar, Joe Carrigan, Carole Theriault, Ben Yelin, Nick Veliky, Gina Johnson, Bennett Moe, Chris Russell, John Petrik, Jennifer Eiben, Rick Howard, Peter Kilpe, and I'm Dave Bittner. Thanks for listening.